Responsible AI in Hiring: Why Governance Must Be Engineered, Not Documented

Responsible AI in hiring requires engineered governance, not just policies, delivered through bias monitoring, audit logs, and transparency.

Every AI vendor in the HR technology space has a Responsible AI page on their website. Most of them contain the same three commitments: fairness, transparency, and human oversight. They are written by policy teams. They are reviewed by legal counsel. And they bear approximately zero relationship to how the underlying models actually make decisions.

This is the governance problem that enterprise buyers have started to recognize and that regulators in the EU, the United States, and across the Asia-Pacific are beginning to codify into law.

A Responsible AI policy is not governance. It is aspiration. Real governance lives in the architecture.

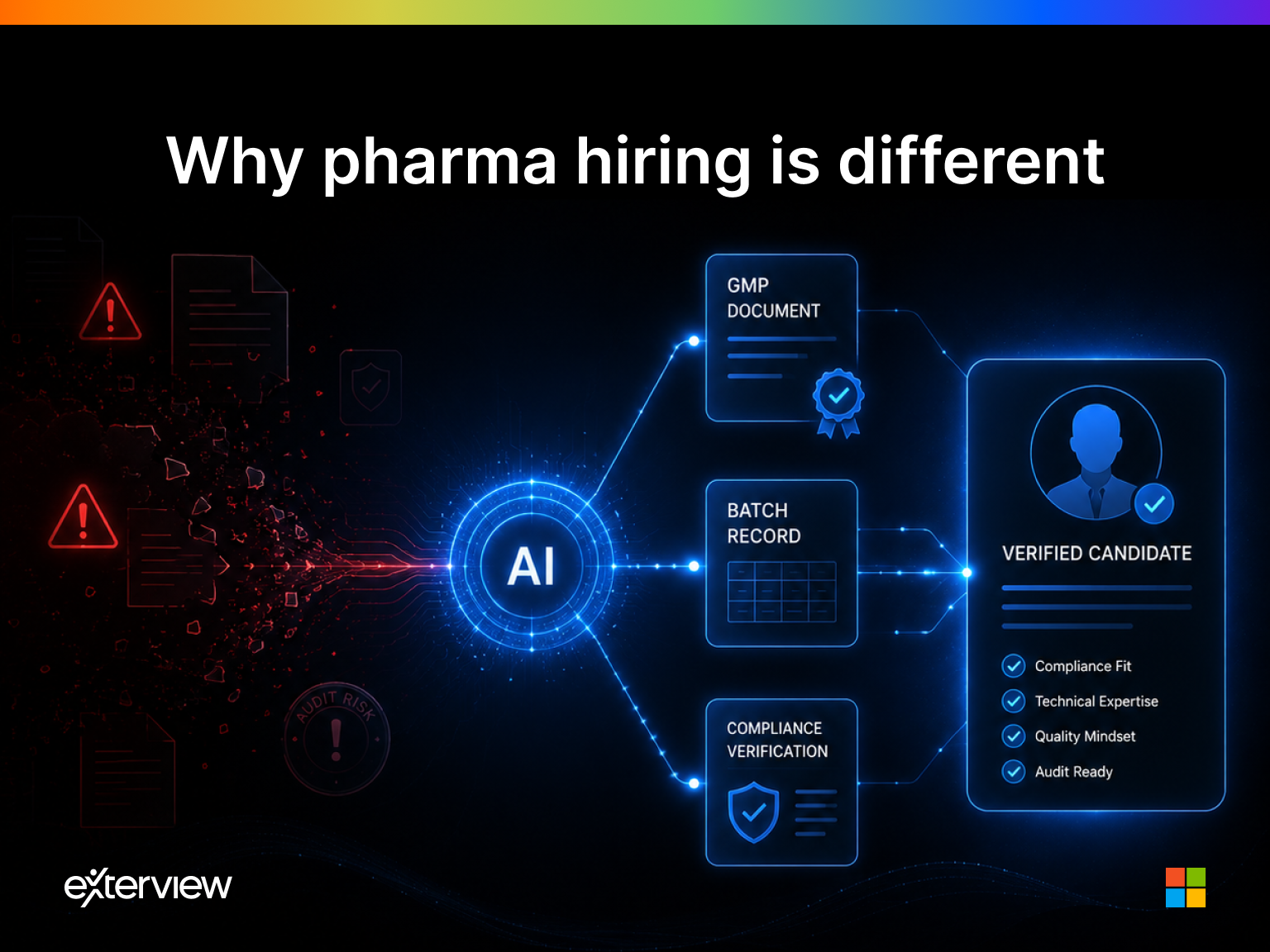

Why AI Hiring Tools Require a Different Standard

AI tools in most enterprise contexts carry contained risk. A demand forecasting model makes a bad prediction. An inventory optimization system misallocates stock. These failures are costly, but they are recoverable.

AI tools in hiring operate in a fundamentally different risk environment. When an AI system makes a biased evaluation, or produces an opaque score that disadvantages a protected class, or advances candidates based on spurious correlations in historical hiring data, the consequences fall directly on human beings. Employment exclusion. Opportunity denial. Career impact that can extend for years.

This is why the standard for AI governance in hiring must be higher than the standard applied to operational enterprise AI. And why documentation of intent is a grossly insufficient substitute for engineered accountability.

The Four Pillars of Engineering Responsible AI

1. Explainable Scoring

Every candidate evaluation score produced by an AI system must be explainable in terms that a non-technical reviewer (a recruiter, a hiring manager, a candidate, or an auditor) can understand and verify.

"The model assessed this candidate as 73/100 for the technical competency requirement" is not explainable.

"The candidate's responses to the three system design questions demonstrated intermediate proficiency in distributed architecture and foundational understanding of data consistency models, resulting in a score of 73 against a role requirement of 80" is explainable.

The difference is not cosmetic. It is the difference between a hiring system that can be scrutinized and one that is a black box with a numerical output.
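One way to make that distinction structural is to refuse to create a score that has no evidence attached. The following is a minimal sketch, not a description of any vendor's implementation; the class names `Evidence` and `ExplainedScore` and their fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    """One observation behind a score (hypothetical structure)."""
    source: str   # where the observation came from, e.g. a set of questions
    finding: str  # plain-language statement a reviewer can check


@dataclass(frozen=True)
class ExplainedScore:
    """A score that cannot exist without supporting evidence."""
    requirement: str
    score: int
    required_score: int
    evidence: tuple[Evidence, ...]

    def __post_init__(self) -> None:
        # Reject bare numbers: a score with no evidence is a black box.
        if not self.evidence:
            raise ValueError("a score must carry at least one piece of evidence")

    def explain(self) -> str:
        findings = "; ".join(f"{e.finding} ({e.source})" for e in self.evidence)
        gap = self.required_score - self.score
        verdict = "meets" if gap <= 0 else f"falls {gap} points short of"
        return (f"{self.requirement}: {findings}. Score {self.score} "
                f"{verdict} the role requirement of {self.required_score}.")


example = ExplainedScore(
    requirement="Technical competency",
    score=73,
    required_score=80,
    evidence=(
        Evidence("system design questions",
                 "intermediate proficiency in distributed architecture"),
        Evidence("system design questions",
                 "foundational understanding of data consistency models"),
    ),
)
print(example.explain())
```

Because the check lives in the constructor, every downstream consumer (report, API, audit export) receives the explanation together with the number rather than as an optional extra.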

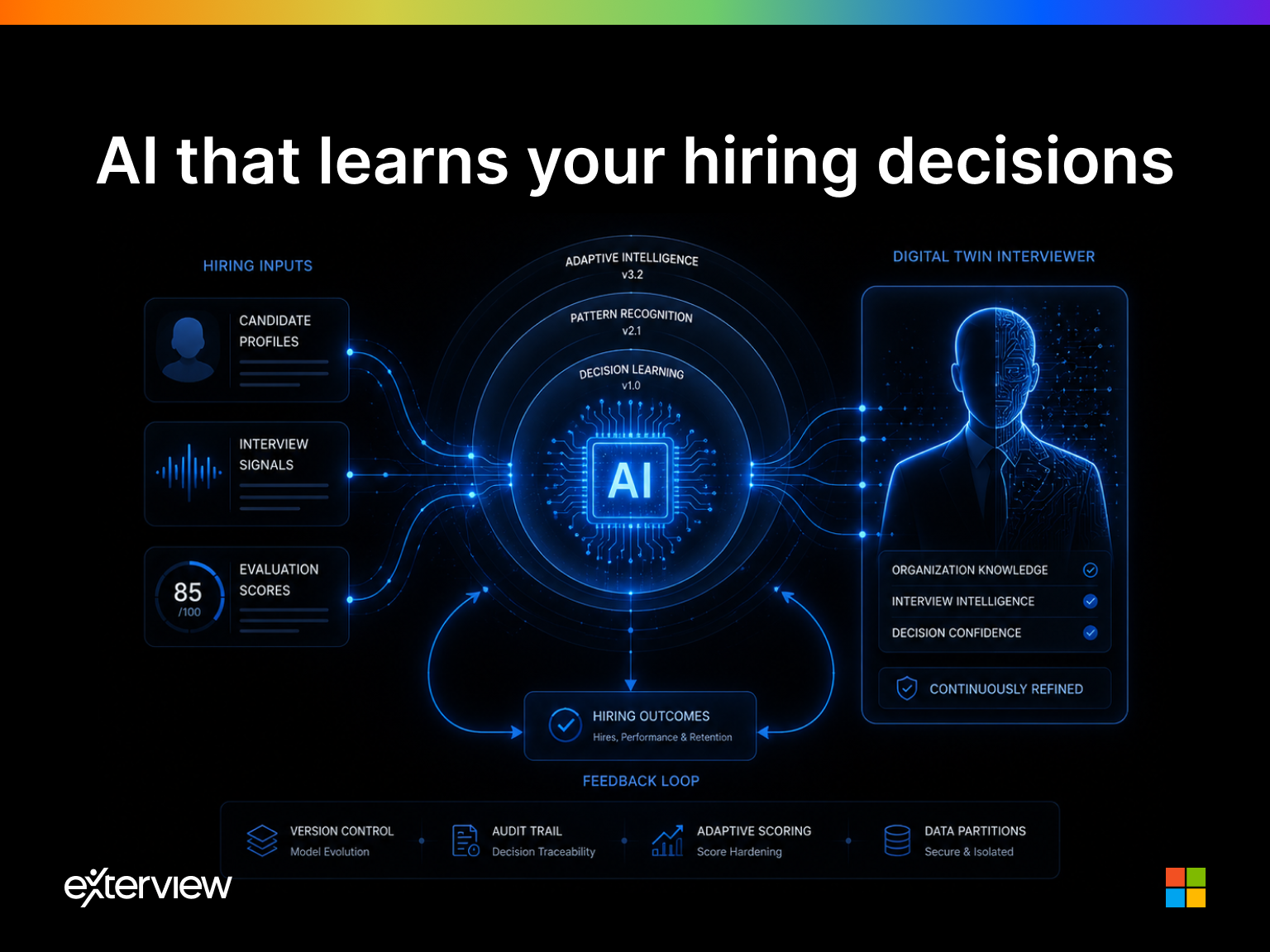

Exterview's evaluation architecture is designed to generate human-readable explanations for every score, at every stage, for every candidate. This is not a reporting feature. It is an architectural requirement.

2. Bias Monitoring and Correction

AI models trained on historical hiring data will, without active intervention, encode the biases present in that historical data. If your organization historically hired fewer women for engineering roles, a model trained on your historical hiring outcomes will learn implicitly that women are less suitable candidates for engineering roles.

This is not a theoretical risk. It is a documented pattern across multiple enterprise AI hiring tools that were deployed without systematic bias monitoring.

Responsible governance requires:

- Ongoing demographic impact analysis: measuring whether the AI's evaluations produce statistically significant differences in outcomes across protected classes

- Training data audits: identifying and correcting for historical biases encoded in the data used to train or calibrate evaluation models

- Adversarial testing: deliberately testing evaluation models against synthetic candidate profiles designed to surface differential treatment by gender, race, age, disability status, or other protected characteristics

- Documented correction protocols: when bias is detected, a defined process for model adjustment, re-evaluation of affected candidates, and stakeholder notification
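The demographic impact analysis item above can be sketched with two standard measurements: the ratio of selection rates between groups, and a two-proportion z-test for whether the difference is statistically significant. This is an illustrative sketch only. The function names are hypothetical, and the thresholds (an impact ratio below 0.8, following the common four-fifths rule of thumb, and a significance level of 0.05) are assumptions that a real program would set with legal and statistical advice.

```python
import math


def impact_ratio(sel_group: int, n_group: int, sel_ref: int, n_ref: int) -> float:
    """Selection rate of a group divided by the reference group's rate."""
    if n_group <= 0 or n_ref <= 0:
        raise ValueError("group sizes must be positive")
    ref_rate = sel_ref / n_ref
    if ref_rate == 0:
        raise ValueError("reference group has a zero selection rate")
    return (sel_group / n_group) / ref_rate


def two_proportion_z_test(sel_a: int, n_a: int, sel_b: int, n_b: int):
    """Two-sided z-test for a difference in selection rates; returns (z, p)."""
    pooled = (sel_a + sel_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # all selected or none selected: no measurable difference
    z = (sel_a / n_a - sel_b / n_b) / se
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def flag_disparity(sel_group, n_group, sel_ref, n_ref,
                   min_ratio=0.8, alpha=0.05):
    """Flag a group for human review (thresholds are assumed defaults)."""
    ratio = impact_ratio(sel_group, n_group, sel_ref, n_ref)
    z, p_value = two_proportion_z_test(sel_group, n_group, sel_ref, n_ref)
    return {
        "impact_ratio": ratio,
        "z": z,
        "p_value": p_value,
        "needs_review": ratio < min_ratio or p_value < alpha,
    }


# Made-up counts: 30 of 100 candidates advanced in one group, 48 of 100 in another.
print(flag_disparity(30, 100, 48, 100))
```

A flag here is a prompt for human investigation, not a finding of bias; small samples in particular can produce low ratios that are not statistically meaningful, which is why the sketch reports both measures.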

Exterview maintains continuous bias monitoring across all evaluation dimensions, with automated flagging of statistical anomalies that warrant human review.
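Adversarial testing with synthetic profiles, as listed among the requirements above, can be approximated by a counterfactual check: score copies of one profile that differ only in a protected attribute and measure the spread. The sketch below is hypothetical throughout. `counterfactual_spread` and both toy scoring functions are invented for illustration, and a real evaluation model would replace them.

```python
from typing import Callable, Iterable, Mapping


def counterfactual_spread(
    score_fn: Callable[[Mapping[str, object]], float],
    base_profile: Mapping[str, object],
    attribute: str,
    values: Iterable[object],
):
    """Score variants of one profile that differ only in `attribute`.

    Returns (scores_by_value, spread); a non-zero spread means the
    attribute alone changed the outcome.
    """
    scores = {}
    for value in values:
        variant = dict(base_profile)  # copy so the base profile is untouched
        variant[attribute] = value
        scores[value] = score_fn(variant)
    spread = max(scores.values()) - min(scores.values())
    return scores, spread


# Toy scorers standing in for a real model (assumptions for illustration).
def fair_scorer(profile):
    return 10.0 * profile["years_experience"]


def biased_scorer(profile):
    penalty = 5.0 if profile["gender"] == "female" else 0.0
    return 10.0 * profile["years_experience"] - penalty


base = {"years_experience": 6, "gender": "unspecified"}
for scorer in (fair_scorer, biased_scorer):
    scores, spread = counterfactual_spread(
        scorer, base, "gender", ["female", "male", "nonbinary"])
    print(scorer.__name__, scores, "spread:", spread)
```

A check like this only catches direct use of the attribute. Proxies such as names, schools, or employment gaps need their own synthetic variants, which is why it complements rather than replaces outcome-level monitoring.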

3. Audit Logging

Enterprise governance requires that every AI decision touching a human employment outcome be logged in sufficient detail to support retrospective review, regulatory inquiry, and legal defense.

Audit logging in hiring AI means:

- Recording every input that contributed to every evaluation score

- Preserving the model version that generated each score, so score recalculation with updated models is possible

- Timestamping every evaluation event with the actor, system, and context that produced it

- Maintaining immutable records that cannot be altered after the fact
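The four requirements above can be illustrated with an append-only log in which each entry stores a hash of the previous one, so any later alteration breaks the chain. This is a minimal in-memory sketch under stated assumptions: the `AuditLog` class and its field names are hypothetical, inputs are assumed to be JSON-serializable, and hash chaining makes tampering detectable rather than impossible. Production systems would pair it with write-once storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained record of evaluation events (sketch)."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, *, actor: str, system: str, model_version: str,
               inputs: dict, score: float, context: str) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,                  # who or what triggered the evaluation
            "system": system,                # which component produced it
            "context": context,              # e.g. requisition and hiring stage
            "model_version": model_version,  # enables later recalculation
            "inputs": inputs,                # every input behind the score
            "score": score,
            "prev_hash": self._entries[-1]["hash"] if self._entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that each entry links to the last."""
        prev = GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append(actor="recruiter-17", system="screening", model_version="eval-2.3.1",
           inputs={"question_ids": [1, 2, 3]}, score=73, context="req-001/stage-1")
log.append(actor="scheduler", system="screening", model_version="eval-2.3.1",
           inputs={"question_ids": [4, 5]}, score=81, context="req-001/stage-2")
print(log.verify())
```

Storing the model version alongside the inputs is what makes retrospective questions answerable: the same inputs can be replayed against that version, or against a newer one, and the two results compared.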

This is not operational overhead. For any organization facing an employment discrimination claim, a regulatory audit, or an internal governance review, complete audit logging is the difference between a defensible record and an indefensible one.

4. Transparent Decision Models

Hiring managers and recruiters who use AI evaluation tools must understand what the tool is evaluating, what it is not evaluating, and where its outputs should and should not influence human decision-making.

Transparency does not mean exposing proprietary model architecture. It means providing users with a clear model card: what signals are assessed, how they are weighted, what the model has and has not been validated against, and what human oversight is required before any AI recommendation becomes a hiring decision.
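A model card of this kind can be kept as structured data so that it is versioned with the model and rendered for users on demand. The sketch below is one possible shape, not a standard schema. The `ModelCard` class, its field names, the example values, and the rule that signal weights sum to 1 are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelCard:
    """User-facing description of an evaluation model (hypothetical schema)."""
    model_version: str
    signals: dict[str, float]              # signal name -> relative weight
    not_assessed: tuple[str, ...]          # what the model deliberately ignores
    validated_against: tuple[str, ...]
    not_validated_against: tuple[str, ...]
    required_oversight: str                # human step before any hiring decision

    def __post_init__(self) -> None:
        # Assumed convention: weights are fractions that sum to 1.
        total = sum(self.signals.values())
        if abs(total - 1.0) > 1e-9:
            raise ValueError(f"signal weights sum to {total}, expected 1.0")

    def render(self) -> str:
        lines = [f"Model card for {self.model_version}", "Signals assessed:"]
        lines += [f"  - {name}: {weight:.0%}"
                  for name, weight in self.signals.items()]
        lines.append("Not assessed: " + ", ".join(self.not_assessed))
        lines.append("Validated against: " + ", ".join(self.validated_against))
        lines.append("Not validated against: "
                     + ", ".join(self.not_validated_against))
        lines.append("Required human oversight: " + self.required_oversight)
        return "\n".join(lines)


card = ModelCard(
    model_version="eval-2.3.1",
    signals={"system design": 0.5, "coding exercise": 0.3, "communication": 0.2},
    not_assessed=("accent", "video appearance", "employment gaps"),
    validated_against=("software engineering roles",),
    not_validated_against=("executive roles",),
    required_oversight="recruiter review before any candidate is rejected",
)
print(card.render())
```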

The Regulatory Horizon

The regulatory landscape for AI in hiring is evolving rapidly, and it is moving consistently toward greater accountability:

- The EU AI Act classifies employment-related AI as high-risk, requiring conformity assessments, bias testing, and transparency obligations before deployment

- New York City Local Law 144 requires bias audits for automated employment decision tools used by employers in NYC, with public disclosure of a summary of the audit results

- Colorado, Illinois, and Maryland have enacted or are advancing legislation requiring disclosure and candidate notification when AI is used in hiring

Organizations that have not built governance into their AI hiring architecture, and have relied instead on policy documentation, will face compliance exposure as these regulations take effect.

The organizations that engineered responsible AI from the beginning will find compliance straightforward, because what regulators are requiring is exactly what they already built.

The Competitive Dimension

There is a procurement dimension to this as well. Enterprise buyers, particularly in regulated industries such as financial services, healthcare, and government contracting, are increasingly requiring vendors to demonstrate, not merely assert, AI governance capability.

A Responsible AI policy document does not satisfy a security questionnaire that asks for audit log format specifications. A documented commitment to fairness does not answer a procurement requirement for demographic impact analysis methodology.

The vendors that win enterprise deals in AI hiring will be the ones who can produce governance artifacts on demand. Because governance was engineered in, not added as an afterthought.

Exterview is built to the governance standard that enterprise hiring requires: explainable, auditable, and continuously monitored. Because responsible AI is not what you write. It is what you build.