The 9-Agent Architecture Reshaping Enterprise Hiring

Multi-agent AI architecture enables scalable, auditable hiring decisions

The 9-Agent Architecture Reshaping Enterprise Hiring

The history of enterprise software is a history of monoliths giving way to modular systems. ERP gave way to best-of-breed. Mainframes gave way to microservices. Monolithic AI models are giving way to multi-agent pipelines.

In talent acquisition, this transition is just beginning. And the companies that understand the architectural shift early will build the platforms that define the next decade of enterprise hiring.

The question is not whether AI will run hiring evaluations. That question is settled. The question is how and specifically, whether the system doing it is a single model pretending to be a pipeline, or an actual pipeline of specialized agents, each owning a discrete function, each auditable, each improvable independently.

The answer matters more than most people realize.

Why a Single Model Is Not Enough

The first generation of AI hiring tools was built around a single model doing many things: reading resumes, scoring responses, generating questions, providing recommendations. This approach works at demonstration level. It fails at enterprise scale.

The reason is specialization. A model asked to simultaneously assess technical competency, evaluate communication patterns, detect response inconsistencies, apply role-specific benchmarks, and generate a structured recommendation is doing too many things with too few guardrails. Each task requires different evaluation logic, different training signal, different confidence thresholds, and different audit requirements.

When a single model fails in this context, the failure is opaque. You cannot identify which function produced the error. You cannot retrain one component without retraining all of them. You cannot meet enterprise audit requirements because you cannot explain which part of the system made which decision.

Modular, multi-agent architecture solves all of these problems. Not elegantly, It introduces its own coordination complexity. But correctly.

The Nine Functions of a Complete Evaluation

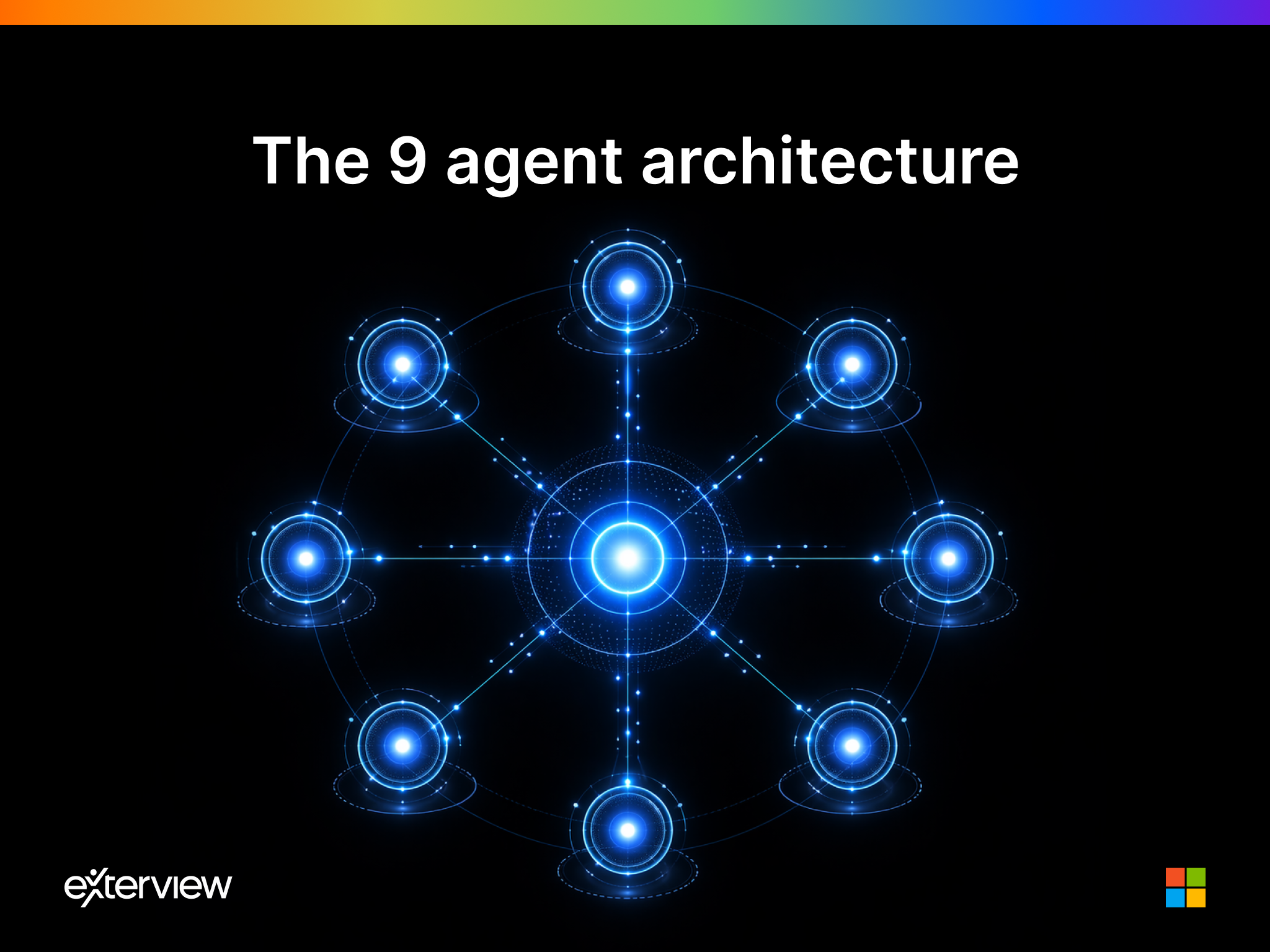

A full-cycle hiring evaluation, done properly, requires nine distinct cognitive functions. Each can be owned by a specialized agent. Each can be independently validated, audited, and improved.

Agent 1: Assessment Generator. Takes a job description, role requirements, and organizational context as inputs. Outputs a calibrated assessment framework, the specific competencies to evaluate, the behavioral indicators to look for, and the question set designed to surface them. This agent is trained on role taxonomy and competency frameworks, not on conversation.

Agent 2: Pre-Screening Coordinator. Conducts the initial candidate qualification pass, verifying basic eligibility, collecting structured background information, and flagging candidates for routing decisions. Reduces recruiter time on unqualified volume without eliminating human judgment from meaningful decisions.

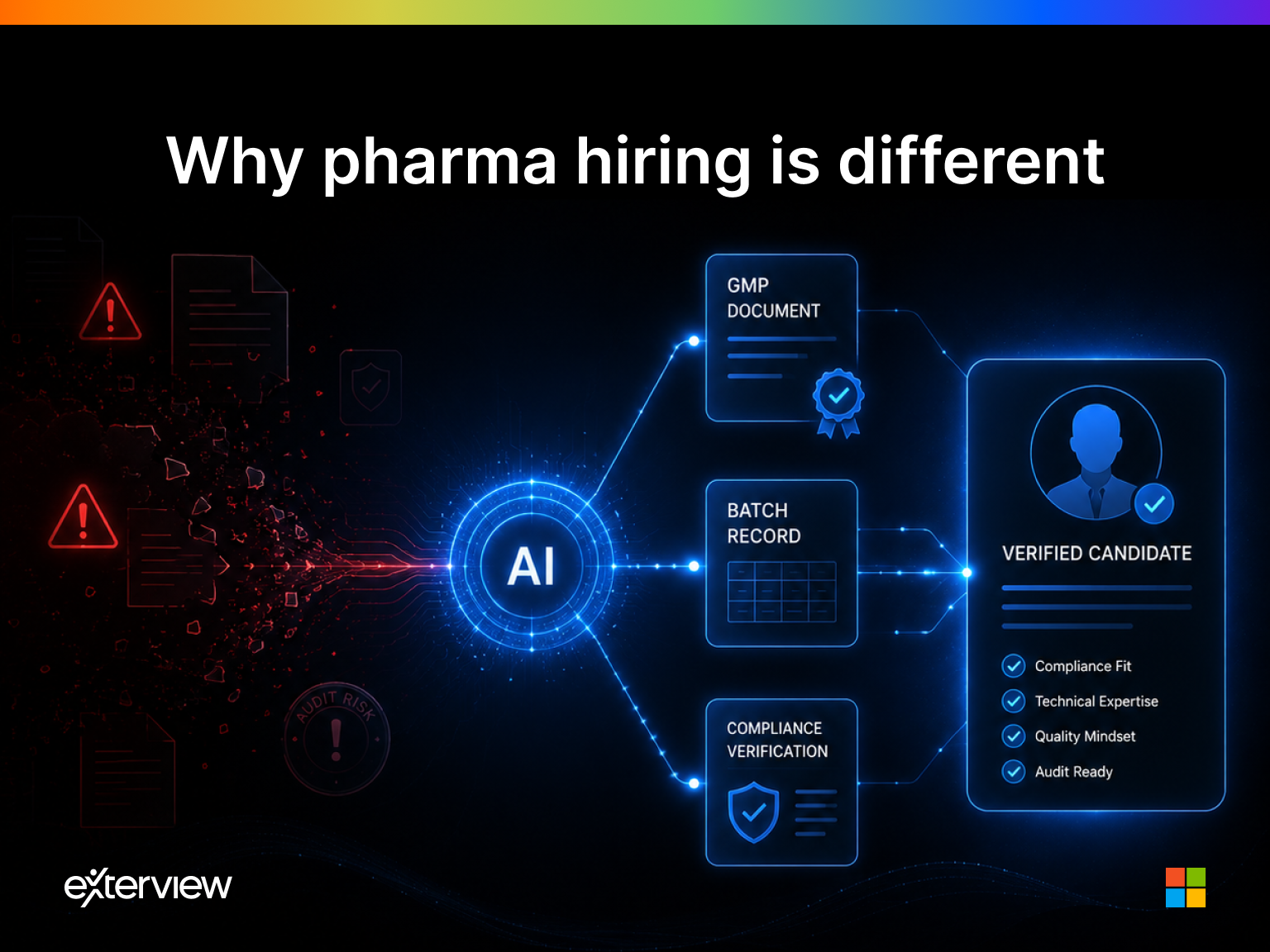

Agent 3: Technical Evaluator. For roles with a technical component, this agent administers and scores domain-specific assessments, coding challenges, case analyses, domain knowledge probes. Operates with role-specific rubrics that are versioned and auditable.

Agent 4: Behavioral Interviewer. Conducts the structured behavioral interview, the STAR-format competency questions that predict job performance better than any other conversational format. Follows a consistent protocol for every candidate, every time, regardless of volume.

Agent 5: Communication Analyst. Evaluates the quality of communication independently of content clarity, structure, confidence calibration, articulation under pressure. This signal is often the difference between two technically equivalent candidates.

Agent 6: Anti-Gaming Detector. Identifies response patterns that suggest coaching, scripted answers, or inconsistency between stated experience and demonstrated knowledge. This agent protects signal integrity without it, a well-coached mediocre candidate can defeat the evaluation.

Agent 7: Bias Normalizer. Applies statistical controls to ensure that evaluation outputs are not systematically influenced by demographic proxies. This is the compliance engine, the component that makes every hiring decision defensible under legal scrutiny.

Agent 8: Benchmark Comparator. Positions each candidate’s scores against the relevant population: prior successful hires in the role, industry percentiles, internal top performer profiles. Converts raw scores into relative signal.

Agent 9: Recommendation Synthesizer. Aggregates outputs from all upstream agents, applies final weighting logic, and generates a structured recommendation with supporting evidence. This is the output the human hiring manager sees not a score, but a reasoned recommendation with an audit trail.

Why Modularity Is a Strategic Advantage

The nine-agent architecture is not just a technical design choice. It is a competitive moat.

Each agent can be retrained on new data without affecting the others. When a customer’s role taxonomy evolves, the Assessment Generator updates independently. When a new form of gaming behavior emerges, the Anti-Gaming Detector is retrained without touching the Behavioral Interviewer.

This composability means the platform improves faster than a monolithic competitor. It also means the platform can be audited granularly, a requirement that enterprise procurement teams and legal departments are beginning to mandate explicitly.

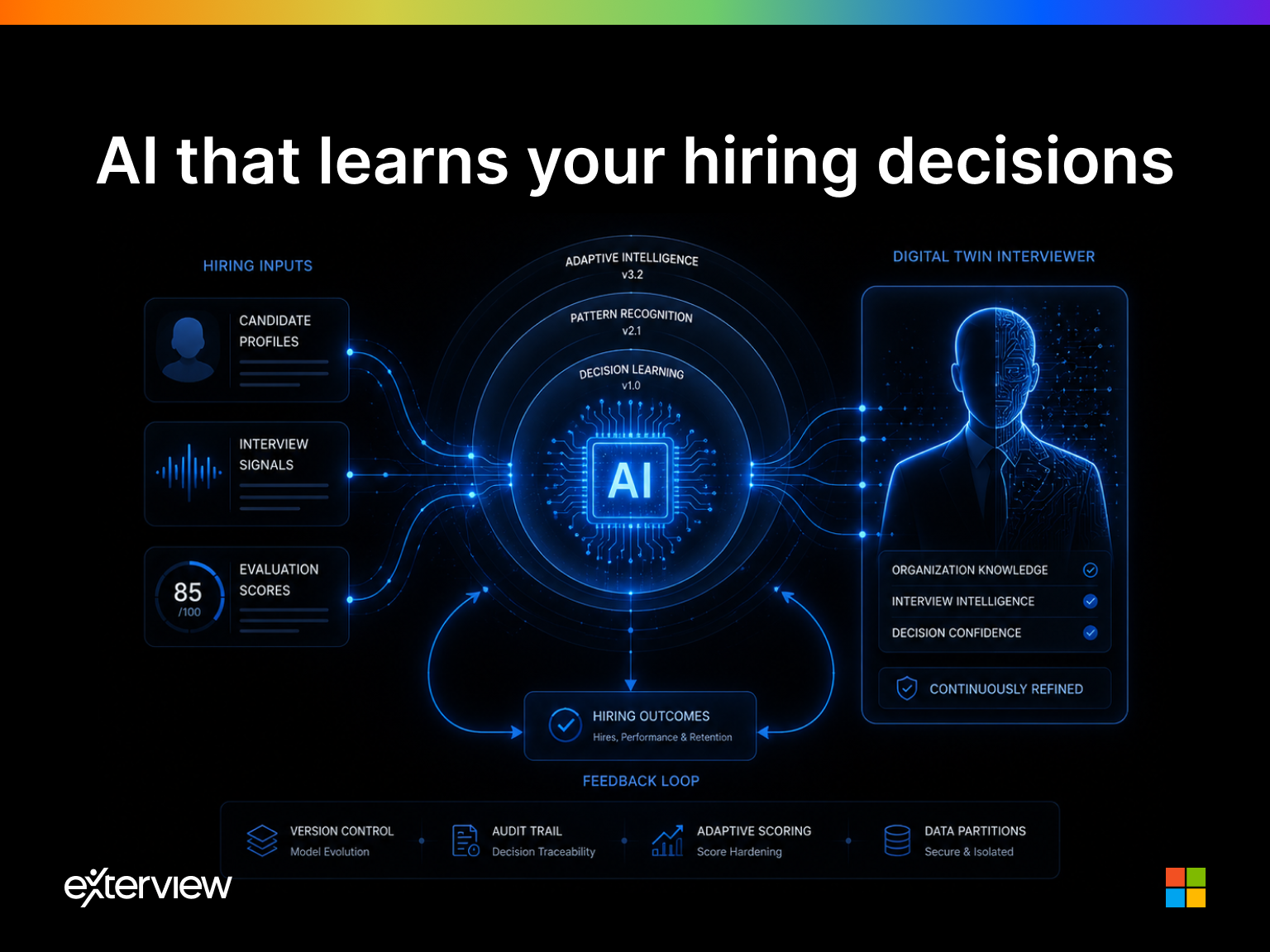

Perhaps most importantly, it means the data moat compounds by component. Each agent accumulates its own training signal, the Assessment Generator learns what predicts role success, the Communication Analyst learns what communication patterns predict retention, the Anti-Gaming Detector learns new evasion techniques as they emerge. These are separate, compounding advantages that a monolithic competitor cannot replicate by adding more parameters to a single model.

The Coordination Problem and How to Solve It

The objection to multi-agent architectures is real: coordination is hard. Agents must pass structured state between them. Failures in one agent must be handled gracefully by downstream agents. Latency accumulates across a pipeline in ways it does not in a single model call.

These are engineering problems, not conceptual problems. They are solved with orchestration frameworks, LangGraph, AutoGen, and purpose-built enterprise agent runtimes that manage state, handle failures, and enforce SLAs across the pipeline. The engineering investment is significant. The resulting architecture is defensible in ways that no monolithic system can match.

Enterprise buyers are beginning to understand this distinction. When a CHRO asks “how does your system make this recommendation,” the answer “one model analyzed everything” is increasingly insufficient. The answer “eight specialized agents contributed scored outputs that a synthesis agent combined according to this documented logic” is what audit committees and legal teams are starting to require.

Building the Category Standard

Every durable software category has a reference architecture, a structural model that defines what the product must be to be taken seriously. Zero Trust defined the perimeter-less security model. Data lakehouse defined the unified analytics architecture. Agentic Talent Intelligence is defining the nine-function evaluation pipeline.

Companies that build on this architecture are building to the category standard. Companies that build monolithic wrappers are building products that will need to be rebuilt.

The architecture is the strategy.

Exterview’s nine-agent architecture is the reference implementation for Agentic Talent Intelligence, a modular, auditable, enterprise-grade evaluation pipeline that replaces the unstructured interview with calibrated, explainable hiring signal.